How Google Gemma 4 is Set to Redefine Local AI Performance and Developer Accessibility

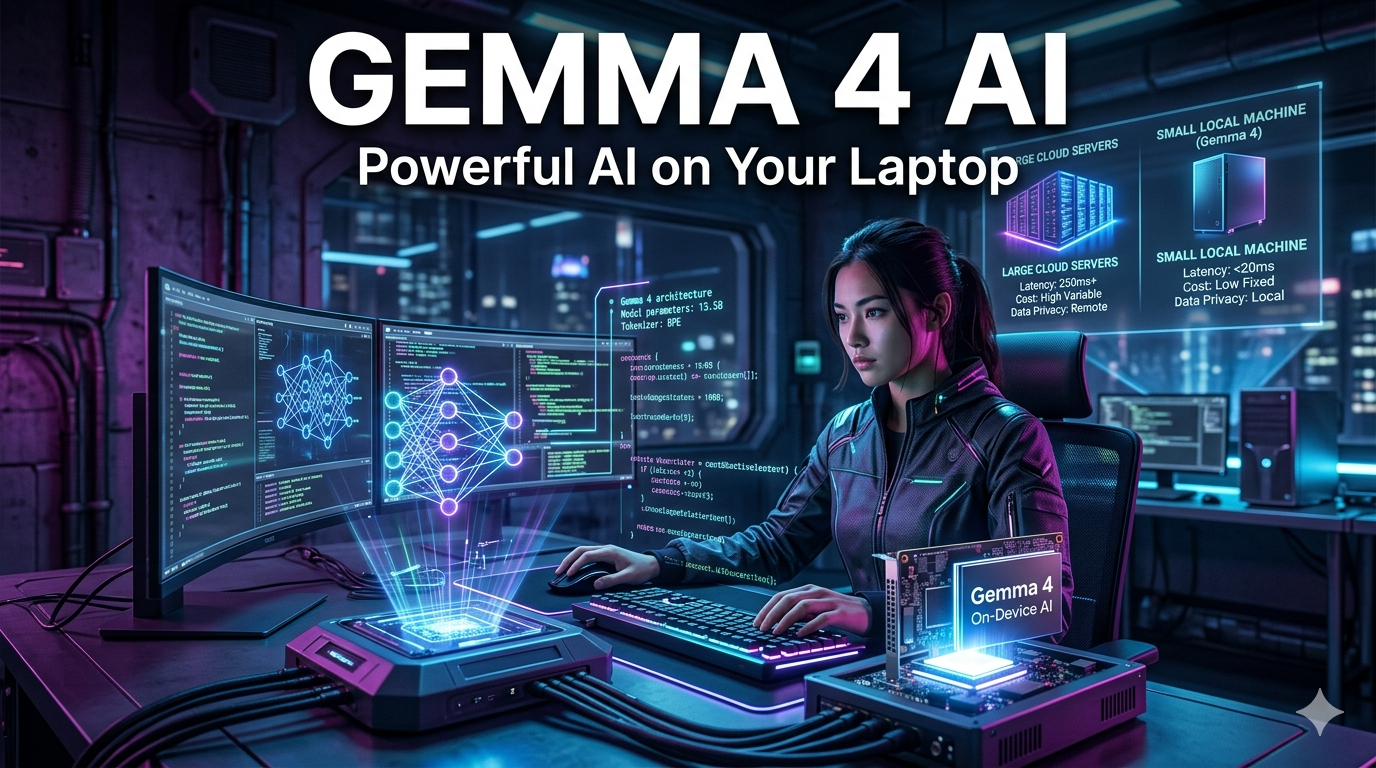

The landscape of Artificial Intelligence is shifting. While massive, closed-source models like Gemini Ultra and GPT-4 once dominated the conversation, the focus has pivoted toward open-weight models that offer privacy, speed, and customization. Leading this charge is Gemma 4, Google’s anticipated next-generation open-weight model family, which is expected to bring state-of-the-art performance to everyday hardware.

In this guide, we dive deep into the rumors, technical expectations, and the revolutionary potential of Google Gemma 4.

What is Google Gemma 4?

Google Gemma 4 is the projected evolution of Google’s “Gemma” family of lightweight, open-weight models. Built from the same technology and research used to create the Gemini models, Gemma 4 is designed specifically for the developer community and researchers who require high-performance AI that can run locally on workstations or in cost-effective cloud environments.

Earlier Gemma releases already used knowledge distillation to train their smaller variants. Gemma 4 is expected to push this further with advanced “distillation” techniques, in which the capabilities of a much larger model such as Gemini 2.0 or 3.0 are compressed into a smaller, more efficient architecture without losing significant reasoning ability.
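To make the idea of distillation concrete, here is a minimal sketch of the classic soft-target distillation loss, in which a small "student" model is trained to match the output distribution of a large "teacher". This is a generic textbook formulation, not Google's actual training recipe, and the logits and temperature below are made-up values for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw logits to probabilities, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) over temperature-softened distributions.

    The result is scaled by temperature**2, a common convention that keeps
    gradient magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2

# Hypothetical logits over a 4-token vocabulary (illustrative values only).
teacher = [4.0, 1.0, 0.5, -2.0]
close_student = [3.5, 1.2, 0.4, -1.5]   # roughly agrees with the teacher
far_student = [-2.0, 0.5, 1.0, 4.0]     # disagrees with the teacher

print("close student loss:", distillation_loss(teacher, close_student))
print("far student loss:  ", distillation_loss(teacher, far_student))
```

The student that tracks the teacher's distribution gets a lower loss, which is the signal that pulls a small model toward the behavior of a large one during training.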

Key Features Expected in Gemma 4 AI

As AI hardware improves, Google is expected to push the boundaries of what “small” models can do. Here are the anticipated features of the Gemma 4 release:

- Multimodal Integration: While previous versions focused heavily on text, Gemma 4 is expected to be natively multimodal, handling text, code, and vision tasks simultaneously.

- Massive Context Windows: We expect Gemma 4 to support context lengths of up to 128k or even 256k tokens, allowing developers to process entire libraries of code or long documents locally.

- Unmatched Efficiency: Using “sliding window attention” and optimized KV caching, Gemma 4 will likely run on consumer-grade GPUs with as little as 12GB to 16GB of VRAM.

- Advanced Reasoning: Google is expected to focus on “chain-of-thought” processing, which should make Gemma 4 significantly better at math, logic, and complex coding tasks than Gemma 2.
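The efficiency and context-length points above interact: the KV cache grows with the number of tokens each layer must remember, and sliding window attention caps that number. The sketch below shows a causal sliding-window mask and a rough cache-size estimate. The layer count, head count, head dimension, and window size are hypothetical placeholders, not Gemma 4 specifications, and the estimate assumes every layer uses the window, which is a simplification.

```python
def sliding_window_mask(seq_len, window):
    """mask[i][j] is True when query position i may attend to key position j.

    Attention is causal (j <= i) and limited to the last `window` positions.
    """
    return [[(j <= i) and (i - j < window) for j in range(seq_len)]
            for i in range(seq_len)]

def kv_cache_bytes(cached_tokens, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Approximate KV cache size: one key and one value vector per token,
    per layer, per KV head. bytes_per_value=2 corresponds to 16-bit values."""
    return 2 * n_layers * n_kv_heads * head_dim * cached_tokens * bytes_per_value

# Hypothetical model shape, chosen only for illustration.
N_LAYERS, N_KV_HEADS, HEAD_DIM = 40, 8, 128
CONTEXT = 128 * 1024   # a 128k-token context
WINDOW = 4096          # assumed sliding-window size

full = kv_cache_bytes(CONTEXT, N_LAYERS, N_KV_HEADS, HEAD_DIM)
windowed = kv_cache_bytes(WINDOW, N_LAYERS, N_KV_HEADS, HEAD_DIM)

print(f"full-attention cache:  {full / 2**30:.3f} GiB")
print(f"sliding-window cache:  {windowed / 2**30:.3f} GiB")

# Small mask example: each row shows which earlier tokens a position can see.
for row in sliding_window_mask(4, 2):
    print(["x" if allowed else "." for allowed in row])
```

Real models often mix windowed and full-attention layers, so actual savings would fall somewhere between the two figures.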

Google Gemma 4 vs. The Competition

The open-source AI space is crowded with giants like Meta’s Llama series and Mistral. How will Google Gemma 4 stand out?

| Feature | Google Gemma 4 | Meta Llama 4 (Projected) | Mistral Next |
| --- | --- | --- | --- |
| Architecture | Gemini-derived Distillation | Dense/MoE | Optimized Transformer |
| Hardware Focus | TPU & NVIDIA Optimized | General GPU | Edge Device Focus |
| Integration | Google Cloud / Vertex AI | Ecosystem Neutral | Euro-centric Cloud |
| Primary Strength | Logical Reasoning & Coding | General Knowledge | Speed/Latency |

Technical Specifications: What’s Under the Hood?

While official specs are under wraps, industry analysts predict that Google Gemma 4 will be released in three sizes to cater to different needs:

- Gemma 4 – 2B: Optimized for mobile devices and edge computing.

- Gemma 4 – 9B: The “sweet spot” for developers, balancing high intelligence with the ability to run on a single consumer laptop.

- Gemma 4 – 27B+: A powerhouse designed to rival GPT-4-level performance while remaining open-weight for enterprise fine-tuning.

People Also Ask

Is Google Gemma 4 free to use?

Yes, like previous versions, Google Gemma 4 is expected to be released under a permissive license that allows for both commercial and academic use, though it remains “open-weight” rather than fully “open-source.”

How does Gemma 4 AI compare to Gemini?

Gemini is a closed model offered as a service through an API or web interface and run on massive computing clusters. Gemma 4 is expected to be a “distilled” version of that intelligence, optimized to be downloaded and run on your own hardware for privacy and lower latency.

What are the system requirements for Google Gemma 4?

For the 9B parameter model, you will likely need a GPU with at least 12GB of VRAM (like an NVIDIA RTX 3060/4070). For the 2B model, a modern smartphone or a standard MacBook with M-series chips will suffice.
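A quick back-of-the-envelope calculation shows where figures like these come from. The sketch below estimates the memory needed for the weights alone at different precisions. The parameter counts are the projected sizes mentioned in this article, not confirmed specifications, and the estimate ignores the KV cache, activations, and framework overhead.

```python
def weight_memory_gib(n_params, bits_per_param):
    """Memory needed just to hold the model weights, in GiB."""
    return n_params * bits_per_param / 8 / 2**30

# Projected sizes from the article (assumptions, not official specs).
projected_sizes = [("2B", 2e9), ("9B", 9e9), ("27B", 27e9)]

for name, n_params in projected_sizes:
    for bits in (16, 8, 4):
        gib = weight_memory_gib(n_params, bits)
        print(f"{name} @ {bits}-bit: {gib:.1f} GiB")
```

Under these assumptions, a 9B model at 16-bit precision needs roughly 17 GiB for weights alone, so fitting it on a 12GB GPU implies 8-bit or 4-bit quantization, with some headroom left for the KV cache.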

Can Gemma 4 write code?

Absolutely. One of the primary use cases for the Gemma family is coding assistance. Gemma 4 is expected to offer stronger Python, Rust, and C++ capabilities, potentially outperforming much larger models on specialized benchmarks.

Why Developers are Switching to Gemma 4 AI

The shift toward Google Gemma 4 isn’t just about performance; it’s about the ecosystem.

- Native Keras Integration: Like earlier Gemma models, Gemma 4 is expected to work with Keras 3 on its JAX, PyTorch, and TensorFlow backends, making fine-tuning straightforward.

- Safety First: Google is expected to build rigorous “Responsible AI” safeguards into the released weights, reducing the risk of harmful outputs, which is a major requirement for enterprise deployment.

- Low Latency: For applications like real-time chatbots or gaming NPCs, running Gemma 4 locally eliminates the “round-trip” delay of calling a cloud API.

Future Implications: The Road to AGI on your Desktop

The release of Gemma 4 AI would mark a significant milestone in the democratization of AI. By giving the public models whose capabilities were considered “super-intelligent” only a year ago, Google is fueling a new wave of localized innovation.

We are moving toward a world where a developer can build a fully autonomous, private AI agent that lives entirely on their hardware, powered by the backbone of Google’s research.

Conclusion: Preparing for the Google Gemma 4 Era

As we look toward the official launch, now is the time for developers to familiarize themselves with the Google AI Edge ecosystem. Whether you are looking to build a private research assistant or a specialized coding tool, Google Gemma 4 is well positioned to be the engine behind the next generation of AI applications.